Two Years in Silence

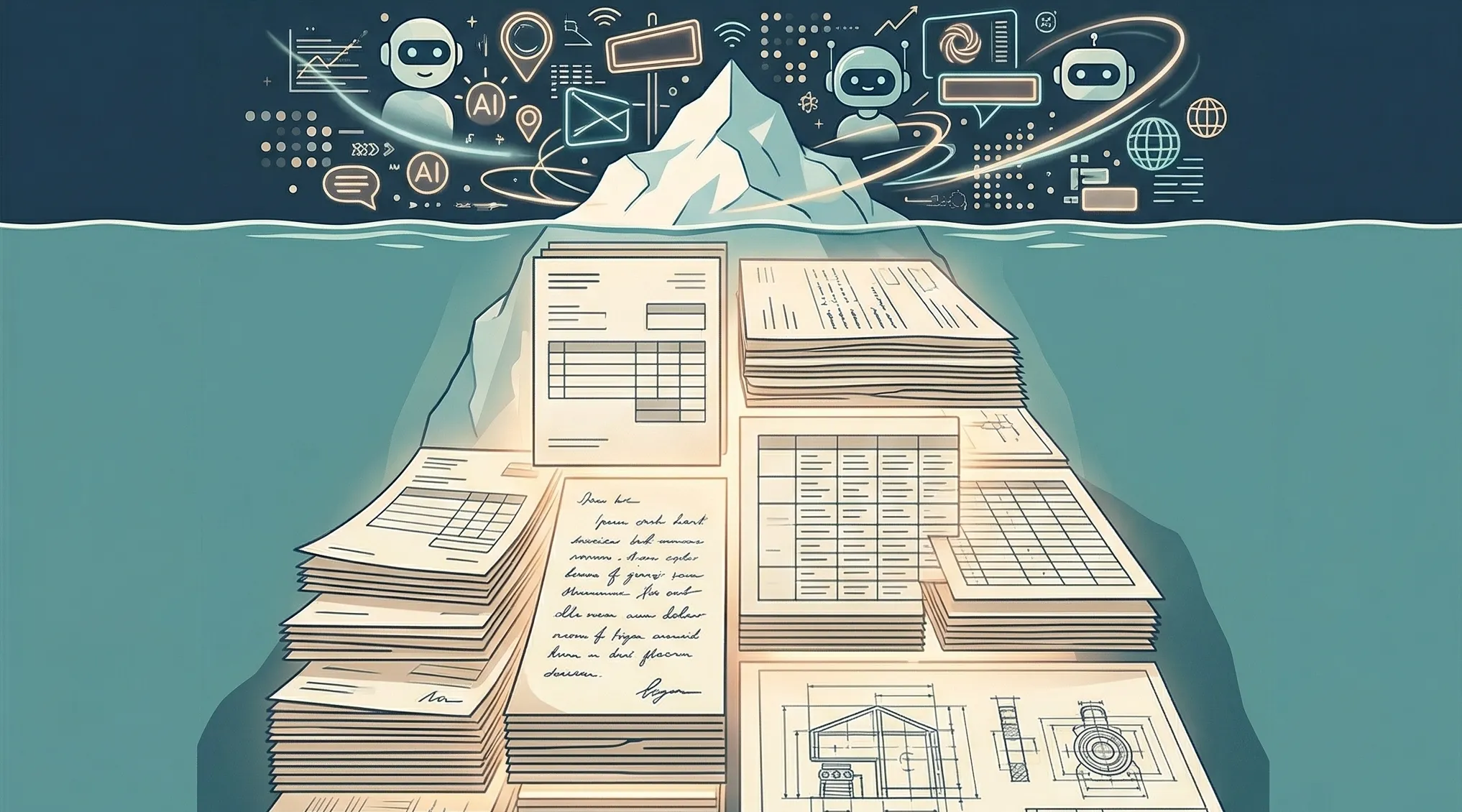

Billions went into smarter models. Almost nothing went into reading the documents enterprises actually run on. We spent two years on that.

The first time we fed a real enterprise document into a state-of-the-art AI system, the result was almost comical. The document was a pharmaceutical regulatory submission, sixty pages of tables, footnotes, multi-column layouts, and handwritten annotations in the margins. The model extracted maybe half the content correctly. Table headers were hallucinated. Footnotes blurred into body text. The handwritten reviewer notes might as well not have existed.

This was the best available technology. It could write poetry, pass a bar exam, and debug code in twelve languages. It could not read the documents that our client's organization actually ran on.

Enterprise Knowledge, Locked Away

That gap defined everything that followed. Over years of deploying AI systems inside European enterprises, my co-founders and I saw the same pattern in every organization we worked with. The knowledge was there. Buried in thousands of documents across SharePoint folders, legacy systems, shared drives, and filing cabinets that someone had scanned to PDF years ago. It held operational procedures refined over decades, engineering specifications representing millions in R&D investment, compliance frameworks built through years of regulatory negotiation. An entire institutional memory, sitting in formats no search system could properly read.

Consider a team of engineers preparing a bid for a large infrastructure project. The technical specifications they need exist in reports from three similar projects completed over the past decade. Those reports contain load calculations, material specifications, lessons learned from field inspections. Finding them means searching across multiple document management systems, opening dozens of PDFs, scanning pages manually for the relevant tables and figures. The engineer who worked on those projects retired last year. Nobody else knows where to look. So the team spends two weeks partially recreating analysis that already existed, because the organization's own knowledge was locked inside documents no system could properly search.

Now picture a compliance officer at a financial institution who needs to answer a specific regulatory question. The answer exists, spread across three policy documents, two internal memos, and a set of guidelines updated last quarter. She knows this because she wrote one of those memos. Finding the current version of each, confirming nothing has been superseded, and assembling the complete answer takes most of a working day. Tomorrow a colleague in another office will face a similar question and start from scratch, unaware the work was already done.

The Gap Nobody Invested In

The technology to solve this has existed, in theory, for years. Language models can reason, summarize, and answer questions with remarkable sophistication. Retrieval systems can search through thousands of documents in seconds. The capability was never the bottleneck. The bottleneck was the step everyone glossed over: getting the information out of the documents accurately in the first place.

Enterprise documents are not clean text. They are scanned pages with uneven lighting and skewed columns. Tables where cell borders are implied by spacing, not by lines. PDFs where a single page contains three different layouts. Handwritten notes in margins that contradict the printed text beside them. Engineering drawings with embedded annotations. Forms filled out by hand decades ago and photocopied until the ink faded. This is not a formatting inconvenience. This is the actual substrate of enterprise knowledge, and any system that cannot read it faithfully is useless for the organizations that need it most.

The AI industry never prioritized this problem. Billions went into training larger models, improving reasoning benchmarks, building chat interfaces. Almost nothing went into making enterprise documents machine-readable at the fidelity those organizations require. The reason is simple economics: consumer AI and startup applications run on clean digital text, web pages, and structured databases. Messy document understanding was a hard problem with a narrow market. Nobody invested in solving it properly.

The result was a strange paradox. AI systems that could reason brilliantly over information they were never properly given. Models sophisticated enough to synthesize answers across hundreds of sources, paired with document processing so crude it lost half the information before the model ever saw it. Organizations kept hearing that AI would transform their operations, and kept discovering that the transformation broke down the moment it encountered a scanned table or a multi-column regulatory filing.

What We Built

That is the problem we set out to solve. Two years ago, we started building Axelered with a conviction that the missing piece in enterprise AI was not a better model or a smarter prompt. It was the infrastructure layer that sits between an organization's actual documents and the intelligence that can reason over them. Document understanding that handles the hard cases: the scanned legacy archives, the complex table structures, the mixed layouts that defeated every existing system. Processing that preserves context across sections, maintains table relationships, reads annotations, and turns messy real-world documents into structured, queryable knowledge.

We chose to do this in silence. Not because we wanted mystique, but because the problem demanded it. Building document processing that works on the formats enterprises actually have, at the quality their decisions depend on, is painstaking engineering. There are no shortcuts. Every document type presents different challenges. Each industry has formats and conventions that require specific understanding. We needed time to get it right, and we refused to ship until we had.

Now it is ready.

The Platform

The platform turns an organization's document corpus into a knowledge base that anyone can use. Business teams get natural-language search and document intelligence that works from day one. Ask a question in plain language, get an answer that cites the specific paragraphs and pages it drew from. No training required, no technical setup. For engineering teams, Axelered is API-first with fully documented endpoints and complete developer tooling. Build custom applications, integrate document intelligence into existing workflows, automate processes that currently depend on someone manually finding and reading the right file. The same knowledge infrastructure serves both audiences because the hard work, understanding the documents, happens once at the foundation layer.

Every answer the system generates traces back to its source. Not a vague reference to a document title, but a direct link to the specific section, table, or paragraph the answer was built from. When someone asks why the system gave a particular answer, the evidence is there, inspectable and verifiable. This is not a chatbot generating plausible guesses. It is a knowledge system that shows its work.
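To make "shows its work" concrete, here is a minimal sketch of what a source-traceable answer could look like as a data structure. It is illustrative only and not Axelered's actual schema: the names `Citation`, `Answer`, `is_grounded`, and `render`, as well as the sample document and values, are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Citation:
    """Pointer to the exact span an answer drew from (hypothetical schema)."""
    document: str  # file name or identifier of the source document
    page: int      # 1-based page number
    locator: str   # e.g. "paragraph 3" or "table 2"
    excerpt: str   # the quoted source text itself


@dataclass
class Answer:
    text: str
    citations: list[Citation] = field(default_factory=list)

    def is_grounded(self) -> bool:
        """True only if there is at least one citation and each carries quoted evidence."""
        return bool(self.citations) and all(c.excerpt.strip() for c in self.citations)

    def render(self) -> str:
        """Format the answer followed by numbered, inspectable sources."""
        lines = [self.text]
        for i, c in enumerate(self.citations, start=1):
            lines.append(f'[{i}] {c.document}, p. {c.page}, {c.locator}: "{c.excerpt}"')
        return "\n".join(lines)


# Made-up example data, for illustration only.
answer = Answer(
    "Max load is 40 kN.",
    [Citation("bridge_report.pdf", 12, "table 2", "Max load: 40 kN")],
)
print(answer.render())
```

The point of the design is that an answer without quoted evidence can be detected and rejected (`is_grounded()` returns `False`) rather than shown to a user as if it were verified.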

We also made an architectural decision early that shaped the entire platform: the processing pipeline runs without external dependencies. Document ingestion, text extraction, embedding generation, search, and answer generation all run locally. The platform can be deployed as a physical appliance in a client's datacenter, inside a private cloud, or as a managed service on EU-sovereign infrastructure. We built it this way because the organizations that need the best document understanding are often the same organizations that cannot send their documents anywhere else. Sovereignty is not our headline. It is a natural consequence of building for the customers who need this most.
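As a rough illustration of the five stages running in a single process with no network calls, consider the toy sketch below. It is not the platform's implementation. The class `LocalPipeline` and its methods are hypothetical, the "embedding" is a plain bag-of-words count standing in for a learned model, text extraction is reduced to decoding bytes, and "answer generation" simply returns the best-matching passage with its source.

```python
import math
import re
from collections import Counter


def extract_text(raw: bytes) -> str:
    """Stand-in for text extraction; real extraction (OCR, layout, tables) is far harder."""
    return raw.decode("utf-8", errors="replace")


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector, not a learned model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class LocalPipeline:
    """Ingest, extract, embed, search, and answer in one process, using only the standard library."""

    def __init__(self) -> None:
        self.index: list[tuple[str, str, Counter]] = []  # (doc_id, passage, vector)

    def ingest(self, doc_id: str, raw: bytes) -> None:
        # Split extracted text into passages on blank lines and index each one.
        for passage in extract_text(raw).split("\n\n"):
            if passage.strip():
                self.index.append((doc_id, passage.strip(), embed(passage)))

    def search(self, query: str, k: int = 1) -> list[tuple[str, str, float]]:
        q = embed(query)
        scored = [(doc_id, passage, cosine(q, vec)) for doc_id, passage, vec in self.index]
        return sorted(scored, key=lambda item: item[2], reverse=True)[:k]

    def answer(self, query: str) -> str:
        # Extractive "answer": return the top passage, or decline if nothing matches.
        hits = self.search(query)
        if not hits or hits[0][2] == 0.0:
            return "No supporting passage found."
        doc_id, passage, _ = hits[0]
        return f"{passage} (source: {doc_id})"


pipeline = LocalPipeline()
pipeline.ingest("policy.txt", b"Retention period is seven years.\n\nVisitors must sign in at reception.")
print(pipeline.answer("What is the retention period?"))
```

Because every stage is an ordinary function call inside one process, the same code path works in an air-gapped datacenter as in a private cloud; in a real system each stage would be replaced by a far more capable component, but the property of never leaving the host would be preserved.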

Out of Stealth

Today, Axelered steps out of stealth. We are here not because the timing is convenient, but because the product is ready, tested, and proven on the kinds of documents that break other systems. We spent two years earning the right to make this claim: your organization's knowledge does not have to be trapped in documents anymore. It can be queried, surfaced, verified, and put to work by anyone who needs it.

The organizations that will pull ahead in the next decade are not the ones with access to the most powerful model. Everyone will have that. The advantage will belong to those whose AI understands their own accumulated knowledge deeply enough to be trusted, with evidence attached. That requires solving the unglamorous, technically demanding problem of actually reading the documents. It is the problem we spent two years on. And we are ready to show what we built.